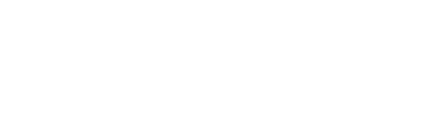

In this step, we are going to take a look at the interaction design and the language model of a simple choose-your-own-adventure game. The game will consist of two interactions, each with two options.

- Initial Interaction: Blue Door or Red Door?

- Second Interaction: Yes or No?

- Create the Interaction Model on Amazon Alexa

- Create the Language Model on Dialogflow

- Next Steps

A note on cross-platform development: We will guide you through all the necessary steps to develop for both Amazon Alexa and Google Assistant (with Dialogflow). However, it's not necessary to build for both platforms right away. We usually suggest starting with Alexa and then porting the experience over to Google Assistant.

Initial Interaction: Blue Door or Red Door?

We already described the player's situation in the project description: the user opens the adventure game and is introduced to the story. They don't know where they are and have two options: blue door or red door. This is the first interaction we're creating in this section.

As we learned in Project 1: Hello World, intents can be used to map what a user says to the meaning behind the phrase or sentence. See Project 1 Step 2: Introduction to Language Models for a refresher on intents.

For this game's first step, you can map out the interaction with two intents (we will consider other solutions in a later step): A 'BlueDoorIntent' and a 'RedDoorIntent'.

Both intents have similar example phrases (this might cause problems that we will deal with later). For example, here are the ones for the 'BlueDoorIntent':
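As a rough sketch of why the two intents are so similar, the snippet below stores a few example phrases per intent and compares them once the color word is removed. The phrases and the helper `strip_color` are illustrative assumptions, not the exact list used in this project.

```python
# Hypothetical sample phrases (assumptions for illustration only).
SAMPLE_PHRASES = {
    "BlueDoorIntent": ["blue door", "the blue door", "go through the blue door"],
    "RedDoorIntent": ["red door", "the red door", "go through the red door"],
}

COLORS = {"blue", "red"}


def strip_color(phrase: str) -> str:
    """Drop the color word so phrases of both intents can be compared."""
    return " ".join(word for word in phrase.split() if word not in COLORS)


blue_shapes = {strip_color(p) for p in SAMPLE_PHRASES["BlueDoorIntent"]}
red_shapes = {strip_color(p) for p in SAMPLE_PHRASES["RedDoorIntent"]}

# Phrase "shapes" that both intents share once the color is ignored.
shared_shapes = blue_shapes & red_shapes
print(sorted(shared_shapes))
```

With these assumed phrases, every phrase shape appears in both intents: the color is the only thing that tells them apart.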

We're going to create these intents below. But first, let's take a look at the second step.

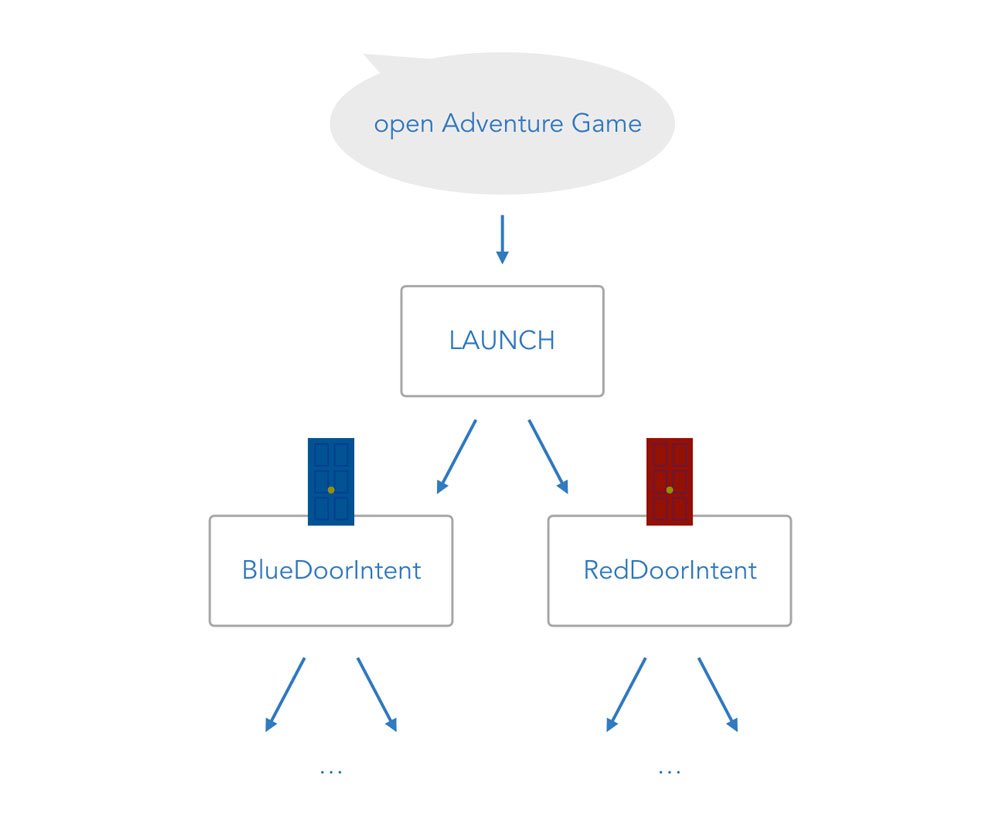

Second Interaction: Yes or No?

No matter which door the player chooses, the next step asks a question that should be answered with yes or no.

As you can see below, there are now 4 possible scenarios for the game:
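The four scenarios are simply every combination of a door and an answer. A minimal sketch, assuming we label the choices with plain strings (the labels are our own, not identifiers from the game):

```python
from itertools import product

# Choices of the first and second interaction (labels are assumptions).
DOORS = ["blue door", "red door"]
ANSWERS = ["yes", "no"]

# Every path through the game is one door combined with one answer.
scenarios = list(product(DOORS, ANSWERS))

for door, answer in scenarios:
    print(f"{door} -> {answer}")
```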

Let's go ahead and create the interaction model. For the sake of fast prototyping, we will focus on Amazon Alexa for each step. However, we're also going to include information for you to bring this game to Google Home and Google Assistant.

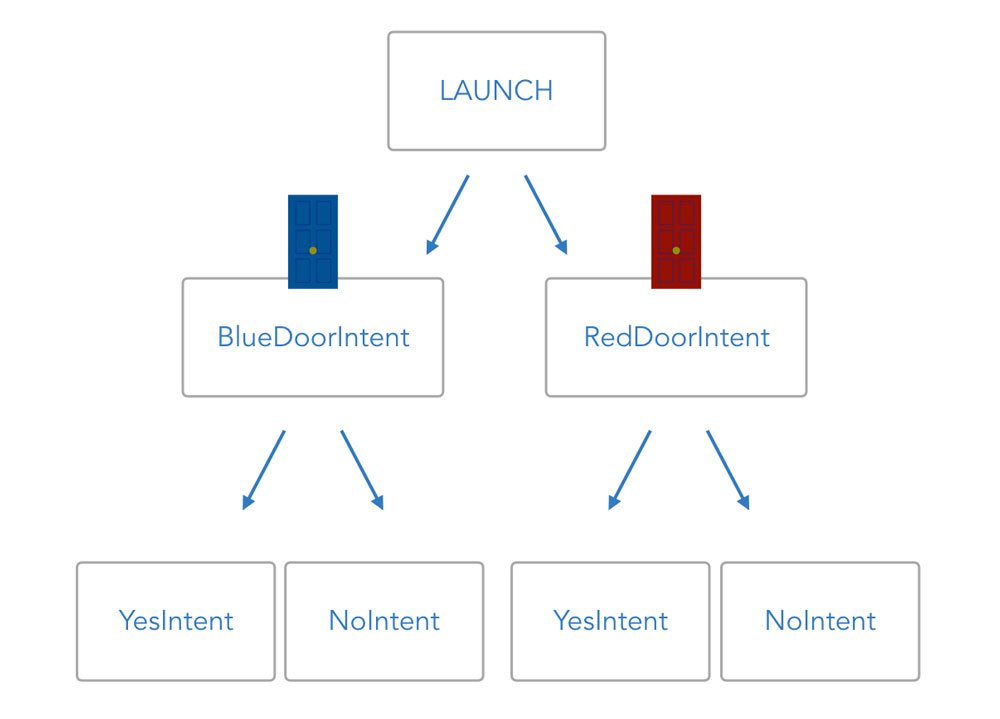

Create the Interaction Model on Amazon Alexa

By now, we already know how the creation of a Skill project on the Amazon Developer Portal works. You can learn about it in more detail in Project 1 Step 3: Create a Project on the Amazon Developer Portal.

Let's create a Skill called "Adventure Game" with the same invocation name:

For the interaction model, we're going to add 4 intents:

- 2 intents for the first step: BlueDoorIntent and RedDoorIntent

- 2 intents for the second step: AMAZON.YesIntent and AMAZON.NoIntent

Alexa: BlueDoorIntent and RedDoorIntent

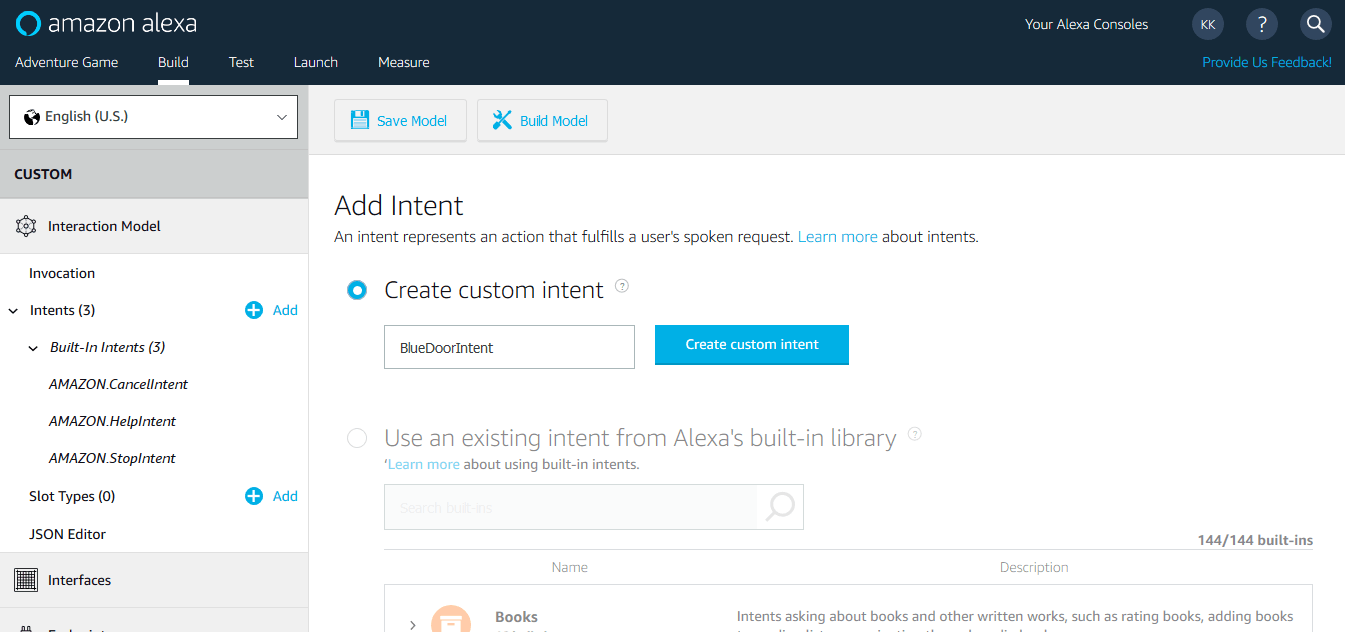

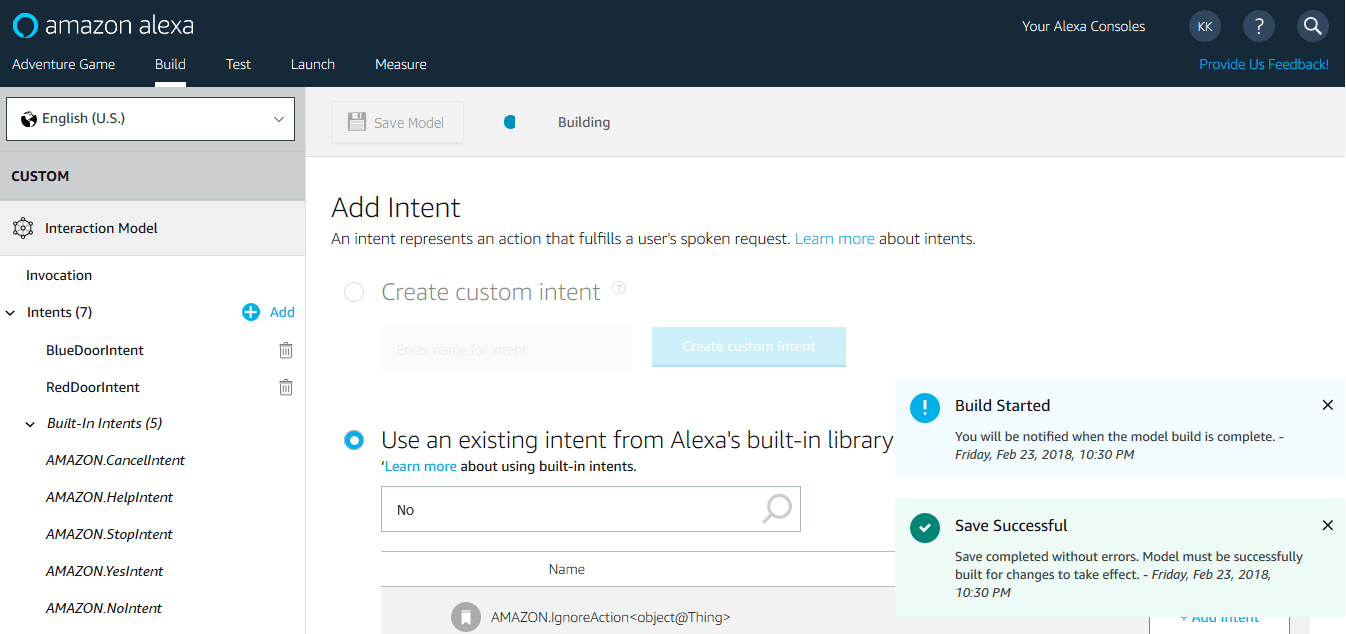

In the Skill Builder Beta, we're adding two custom intents: BlueDoorIntent and RedDoorIntent.

Again, these are sample utterances for BlueDoorIntent:

This is what it looks like in the Skill Builder:

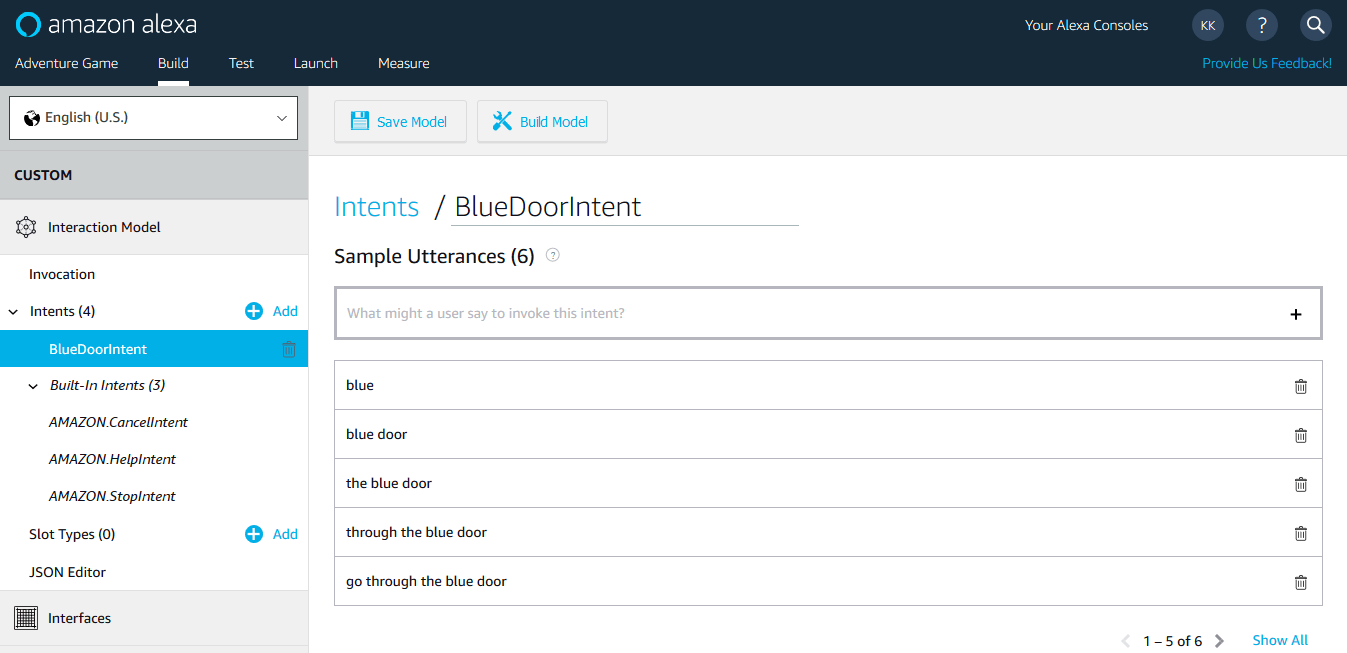

The RedDoorIntent looks similar:

We will see later that creating two almost identical intents is not ideal. But for now, this is fine.

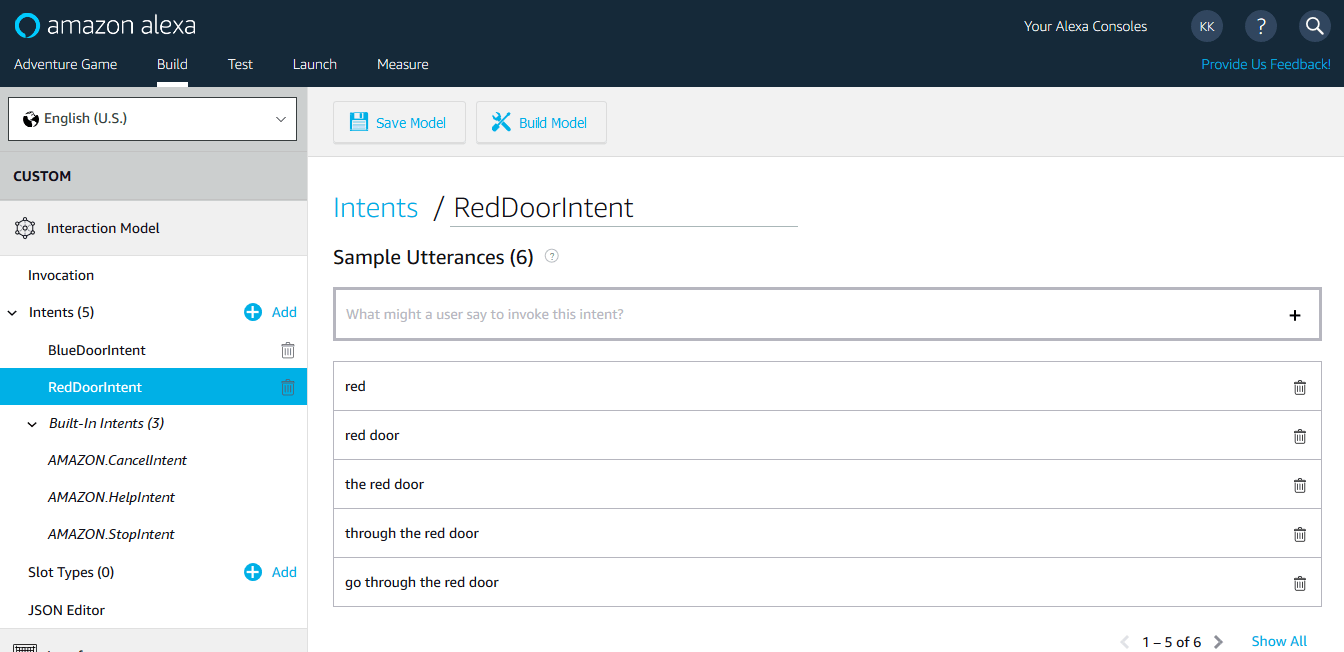

AMAZON.YesIntent and AMAZON.NoIntent

In the next step, we want to add support for a user replying with "Yes" or "No".

For this, Amazon offers so-called built-in intents that are already trained with utterances, so you don't have to.

For Yes and No, we're adding AMAZON.YesIntent and AMAZON.NoIntent.

We will take a deeper look into those Yes and No intents in step 4: Built-in Intents and intentMap.
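To summarize the four intents in one place, here is a sketch that assembles them into a JSON document. It follows the `interactionModel` / `languageModel` layout used by the JSON editor of the Alexa developer console as far as we know; the schema in the Skill Builder version shown here may differ. The sample utterances and the helper `custom_intent` are assumptions for illustration.

```python
import json


def custom_intent(name, samples):
    """Build one custom intent entry (hypothetical helper)."""
    return {"name": name, "slots": [], "samples": samples}


interaction_model = {
    "interactionModel": {
        "languageModel": {
            # Lower-cased here, assuming the console expects lowercase names.
            "invocationName": "adventure game",
            "intents": [
                # Sample utterances below are illustrative assumptions.
                custom_intent("BlueDoorIntent", ["blue door", "the blue door"]),
                custom_intent("RedDoorIntent", ["red door", "the red door"]),
                # Built-in intents are already trained, so no samples needed.
                {"name": "AMAZON.YesIntent", "samples": []},
                {"name": "AMAZON.NoIntent", "samples": []},
            ],
            "types": [],
        }
    }
}

intent_names = [
    intent["name"]
    for intent in interaction_model["interactionModel"]["languageModel"]["intents"]
]
print(json.dumps(interaction_model, indent=2))
```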

We're done with the interaction model for now. Click "Build Model":

After a moment, the model should be built successfully.

You can now either go to the next step or take a look at how a language model is created on Dialogflow to use with Google Assistant.

Create the Language Model on Dialogflow

Creating a language model for Google Assistant is a little different from Alexa, where everything is done on one platform, the Amazon Developer Portal. As we learned in Project 1 Step 4: Create a Project on Dialogflow and Google Assistant, it is common to use Dialogflow for the natural language understanding and to connect it to Google Assistant through the built-in integration.

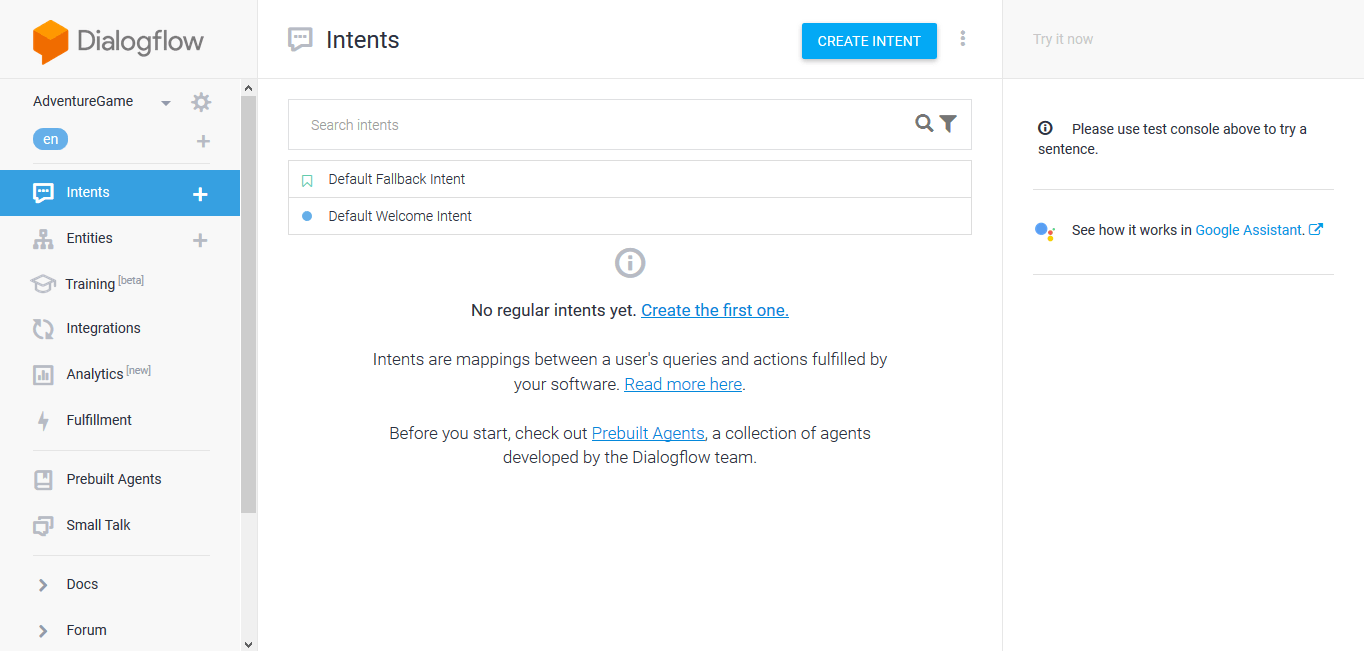

Let's go to the Dialogflow Console and create a new agent called AdventureGame. This is what the screen of the agent including default intents looks like:

We're going to add 4 intents to this agent:

- 2 intents for the first step: BlueDoorIntent and RedDoorIntent

- 2 intents for the second step: YesIntent and NoIntent

Dialogflow: BlueDoorIntent and RedDoorIntent

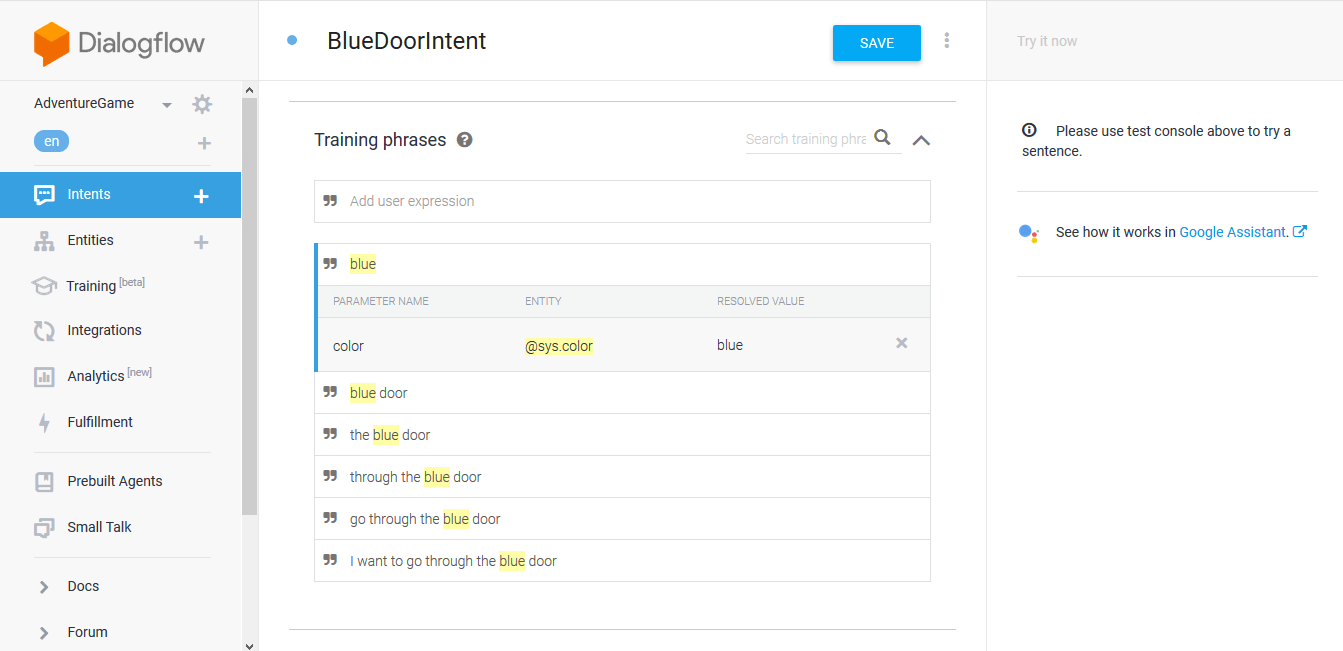

Just like we did for Amazon Alexa, we're creating an intent for each door, for example with these phrases for BlueDoorIntent:

This is what it looks like on Dialogflow:

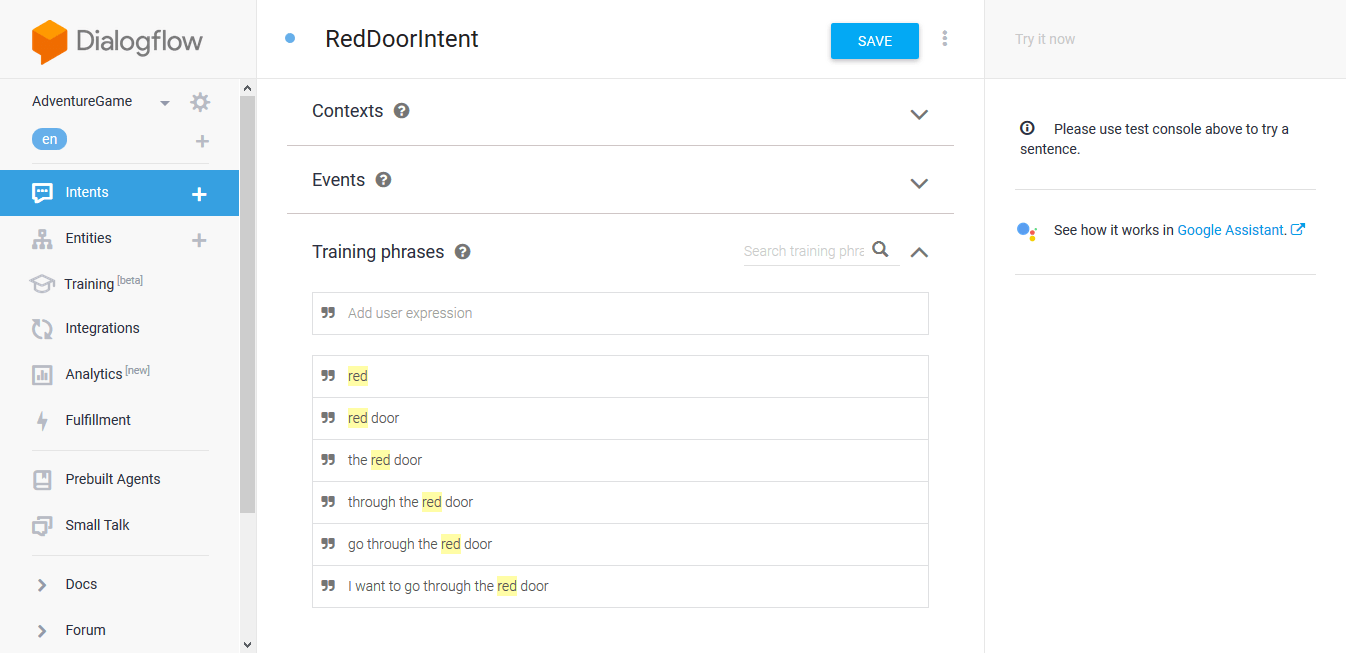

Notice something? Dialogflow automatically recognizes blue as a color. We will make use of this in a later step. For now, let's also create a RedDoorIntent:

YesIntent and NoIntent

For Yes and No on Amazon Alexa, we could rely on the built-in intents AMAZON.YesIntent and AMAZON.NoIntent. On Dialogflow, we need to create them ourselves.
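Because the same meaning now has a different intent name on each platform, the game logic needs a way to treat them alike. The sketch below shows the general idea of mapping platform-specific names to one shared name. `INTENT_MAP` and `normalize_intent` are hypothetical names for illustration, not a framework API.

```python
# Map platform-specific intent names to the name the game logic uses.
# (Hypothetical mapping for illustration.)
INTENT_MAP = {
    "AMAZON.YesIntent": "YesIntent",
    "AMAZON.NoIntent": "NoIntent",
}


def normalize_intent(platform_intent: str) -> str:
    """Return the shared intent name, or the input if no mapping exists."""
    return INTENT_MAP.get(platform_intent, platform_intent)


print(normalize_intent("AMAZON.YesIntent"))  # Alexa built-in intent
print(normalize_intent("YesIntent"))         # Dialogflow custom intent
print(normalize_intent("BlueDoorIntent"))    # same name on both platforms
```

With a mapping like this, a single handler per intent can serve both platforms.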

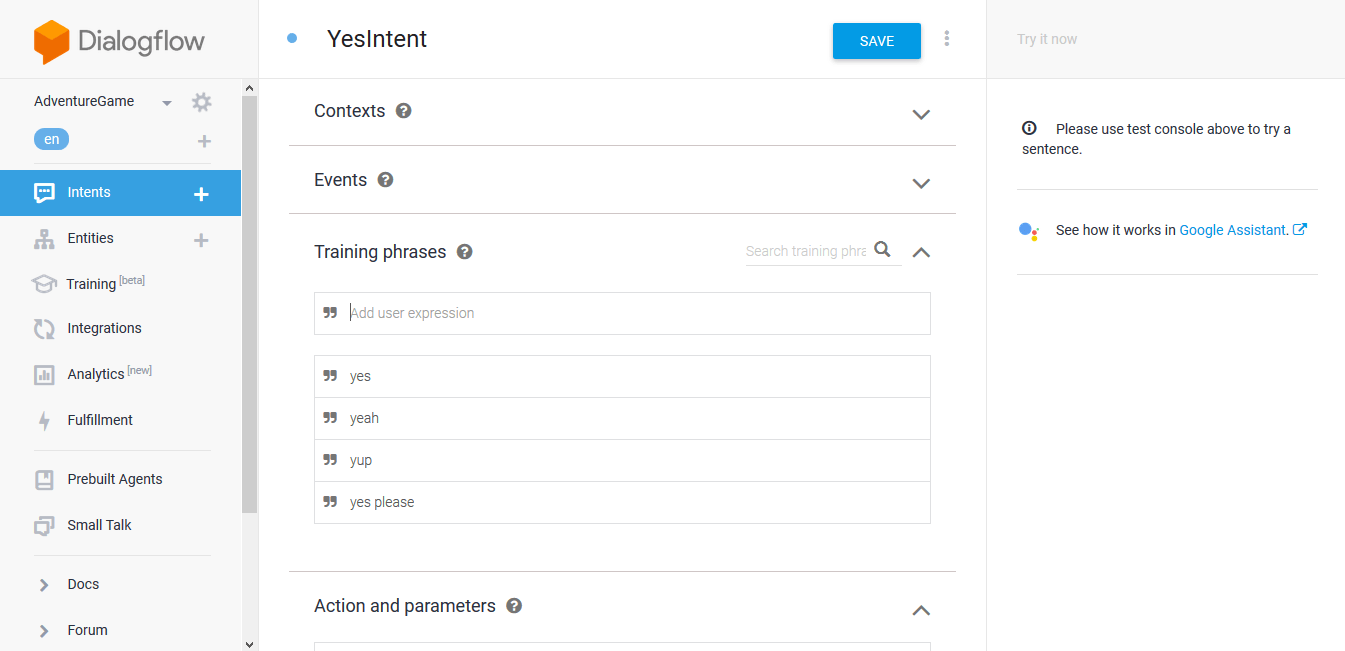

We're just going to add a few sample phrases like "yes please", "yes", or "yup". This is what YesIntent looks like:

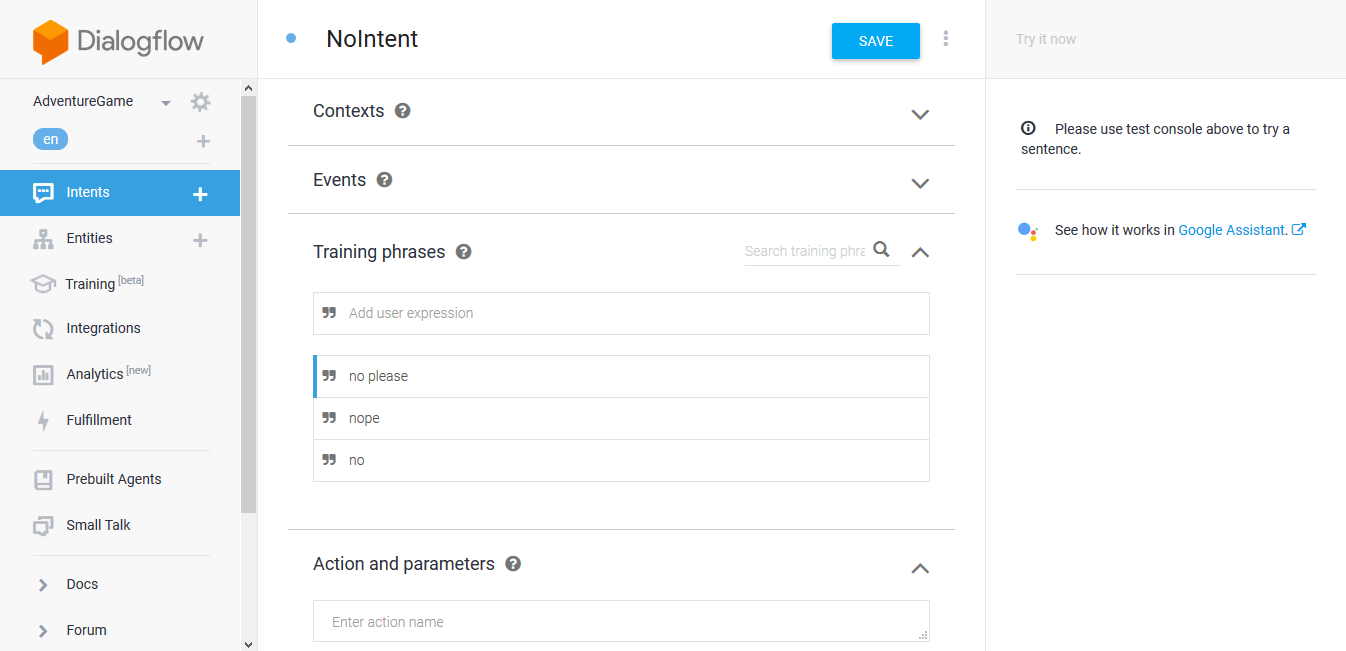

And this is NoIntent:

Done. The agent trains itself automatically every time you save an intent.
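To make the role of the sample phrases more concrete, here is a deliberately naive sketch that maps an utterance to an intent by exact match. Dialogflow's trained agent generalizes far beyond exact matches, so this only illustrates the idea of phrases pointing to intents. The YesIntent phrases are the ones mentioned above; the NoIntent phrases and the function `match_intent` are assumptions.

```python
from typing import Optional

TRAINING_PHRASES = {
    "YesIntent": ["yes please", "yes", "yup"],
    # Assumed phrases for illustration; pick your own in the console.
    "NoIntent": ["no", "nope", "no thanks"],
}


def match_intent(utterance: str) -> Optional[str]:
    """Return the intent whose phrase list contains the utterance, if any."""
    cleaned = utterance.strip().lower()
    for intent, phrases in TRAINING_PHRASES.items():
        if cleaned in phrases:
            return intent
    return None  # no exact match; a trained agent may still find one


print(match_intent("Yup"))
print(match_intent("no thanks"))
print(match_intent("maybe"))
```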