Jovo Model

The Jovo Model is a language model abstraction layer that works across NLU providers. It allows you to maintain a language model in a single source of truth and then translate it into different platform schemas like Amazon Alexa, Google Assistant, Dialogflow, Rasa NLU, Microsoft LUIS, and more.

- Introduction

- Supported Platforms

- Model Structure

- Using the Jovo Model with the Jovo CLI

- Using the Jovo Model npm Packages

- Contributing

Introduction

The Jovo Framework works with many different platforms and natural language understanding (NLU) providers that turn spoken or written language into structured meaning. Each of these services has its own schema that needs to be used to train its models. If you want to use more than one provider, designing and maintaining the different language models can become a tedious task.

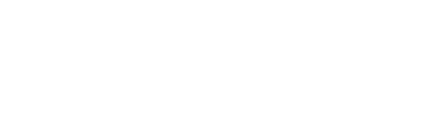

The Jovo Model enables you to store language model information in a single JSON file. For Jovo projects, you can find the language model files in the /models folder.

The Jovo Model is mainly used by the Jovo CLI's build command, which turns the files into platform-specific models like Alexa Interaction Models and Dialogflow agents. These resulting models can then be deployed to the respective platforms and trained there. Learn more here: Using the Jovo Model with the Jovo CLI.

We chose to open source this repository to provide more flexibility to Jovo users and tool providers. You can directly access the Jovo Model features from your code and make transformations yourself. Learn more here: Using the Jovo Model npm Packages.

Supported Platforms

The Jovo Model supports the following NLU providers (see the packages folder in the jovo-model repository):

- Amazon Alexa

- Amazon Lex

- Google Dialogflow

- Google Assistant Conversational Actions (alpha)

- Microsoft LUIS

- Rasa NLU (alpha)

- NLP.js (alpha)

Model Structure

Every locale you choose to support (en-US, de-DE, etc.) is represented by its own language model file. For example, the Jovo "Hello World" template comes with an en-US.json model.

The Jovo Model consists of several elements, which we will go through step by step in this section.
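
To give an overview before going through each element, here is a minimal TypeScript sketch of the structure described in this section. The interface names (JovoModel, JovoIntent, and so on) and the sample content are hypothetical and only mirror the fields named below; they are not exports of the Jovo packages or a copy of the "Hello World" en-US.json.

```typescript
// Hypothetical types mirroring the Jovo Model elements described in this section.
interface JovoInput {
  name: string;
  // Either one input type for all NLU services or one per service.
  type: string | Record<string, string>;
}

interface JovoIntent {
  name: string;
  phrases: string[];
  inputs?: JovoInput[];
}

interface JovoInputTypeValue {
  value: string;
  synonyms?: string[];
}

interface JovoInputType {
  name: string;
  values: JovoInputTypeValue[];
}

interface JovoModel {
  invocation: string | Record<string, string>;
  intents: JovoIntent[];
  inputTypes?: JovoInputType[];
}

// Illustrative en-US model with two intents and one custom input type.
const enUS: JovoModel = {
  invocation: "my test app",
  intents: [
    { name: "HelloWorldIntent", phrases: ["hello", "say hi", "hi"] },
    {
      name: "LivingCityIntent",
      phrases: ["I live in {city}"],
      inputs: [{ name: "city", type: "myCityInputType" }],
    },
  ],
  inputTypes: [
    {
      name: "myCityInputType",
      values: [{ value: "New York", synonyms: ["New York City"] }, { value: "Berlin" }],
    },
  ],
};

console.log(enUS.intents.map((intent) => intent.name).join(", ")); // HelloWorldIntent, LivingCityIntent
```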

Invocation

The invocation is used by some voice assistant platforms as the "app name" to access the app (see Voice App Basics in the Jovo Docs).

It is possible to add platform-specific invocations like this:

Currently, this element is supported by Alexa Skills and Google Assistant Conversational Actions. If you use Google Assistant with Dialogflow, you need to set the invocation name manually in the Actions on Google console.
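
One way to read such an invocation is sketched below in TypeScript, assuming it is either a plain string shared by all platforms or an object keyed by platform. The keys alexa and googleAssistant and the getInvocation helper are assumptions for illustration, not confirmed Jovo Model keys or APIs.

```typescript
// Assumption: the invocation is a string (same for all platforms)
// or an object mapping a platform key to its invocation name.
type Invocation = string | Record<string, string>;

// Hypothetical helper: returns the invocation name for a platform,
// or undefined if the object has no entry for that platform.
function getInvocation(invocation: Invocation, platform: string): string | undefined {
  if (typeof invocation === "string") {
    return invocation;
  }
  return invocation[platform];
}

const shared: Invocation = "my test app";
const perPlatform: Invocation = {
  alexa: "my test app",
  googleAssistant: "my test action",
};

console.log(getInvocation(shared, "alexa")); // my test app
console.log(getInvocation(perPlatform, "googleAssistant")); // my test action
```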

Intents

Intents can be added to the JSON as objects that include a name, phrases, and (optionally) inputs.

The MyNameIsIntent from the Jovo "Hello World" sample app is an example of such an intent.
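
A sketch of how such an intent could look, written as a TypeScript object with the name, phrases, and inputs fields listed above. The phrases and the per-platform input types are illustrative assumptions, not a verbatim copy of the template.

```typescript
// Hypothetical shape of an intent in the Jovo Model.
interface JovoIntent {
  name: string;
  phrases: string[];
  inputs?: { name: string; type: string | Record<string, string> }[];
}

// Illustrative MyNameIsIntent: every phrase contains the {name} input.
const myNameIsIntent: JovoIntent = {
  name: "MyNameIsIntent",
  phrases: ["{name}", "my name is {name}", "i am {name}", "you can call me {name}"],
  inputs: [
    {
      name: "name",
      // One built-in input type per NLU service (see Inputs below).
      type: { alexa: "AMAZON.US_FIRST_NAME", dialogflow: "@sys.given-name" },
    },
  ],
};

console.log(`${myNameIsIntent.name} has ${myNameIsIntent.phrases.length} phrases`);
// MyNameIsIntent has 4 phrases
```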

Intent Name

The name specifies what the intent is called on the platforms. We recommend using a consistent standard. In our examples, we add Intent to each name, like MyNameIsIntent.

Phrases

This is an array of example phrases that will be used to train the language model on the respective NLU platforms.

Some providers use different names for these phrases, for example utterances or "user says."

Inputs

Often, phrases contain variable input such as slots or entities, as some NLU services call them. In the Jovo Model, they are called inputs.

Inputs consist of a name and a type (learn more in the Input Types section). For example, an intent with phrases like I live in {city} would come with an input named city.
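
Because inputs are referenced by name inside phrases, it can help to check that every {placeholder} has a matching input. The following TypeScript sketch does that; the helpers placeholdersIn and missingInputs and the LivingCityIntent example are hypothetical and not part of the Jovo packages.

```typescript
interface JovoInput {
  name: string;
  type: string | Record<string, string>;
}

interface JovoIntent {
  name: string;
  phrases: string[];
  inputs?: JovoInput[];
}

// Collects the unique input names written as {placeholders} in the phrases.
function placeholdersIn(phrases: string[]): string[] {
  const names: string[] = [];
  for (const phrase of phrases) {
    const pattern = /\{(\w+)\}/g;
    let match: RegExpExecArray | null;
    while ((match = pattern.exec(phrase)) !== null) {
      if (names.indexOf(match[1]) === -1) names.push(match[1]);
    }
  }
  return names;
}

// Returns placeholders that have no matching entry in the intent's inputs.
function missingInputs(intent: JovoIntent): string[] {
  const declared = (intent.inputs || []).map((input) => input.name);
  return placeholdersIn(intent.phrases).filter((name) => declared.indexOf(name) === -1);
}

const livingCityIntent: JovoIntent = {
  name: "LivingCityIntent",
  phrases: ["I live in {city}", "my home is {city}"],
  inputs: [{ name: "city", type: "myCityInputType" }],
};

console.log(missingInputs(livingCityIntent).length); // 0
```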

You can also choose to provide a different input type for each NLU service.

You can either reference input types defined in the inputTypes array, or reference built-in input types provided by the respective NLU platforms (like AMAZON.US_FIRST_NAME for Alexa).
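
A sketch of an input with per-service types and a helper that picks the right one, assuming the type is either a string or an object keyed by NLU name. The keys alexa and dialogflow and the inputTypeFor helper are assumptions; AMAZON.US_FIRST_NAME and @sys.given-name are built-in types of Alexa and Dialogflow, and myCityInputType stands for a custom type from the inputTypes array.

```typescript
// An input's type is either shared by all NLU services or set per service.
interface JovoInput {
  name: string;
  type: string | Record<string, string>;
}

// Hypothetical helper: picks the input type a given NLU service should use.
function inputTypeFor(input: JovoInput, nlu: string): string | undefined {
  return typeof input.type === "string" ? input.type : input.type[nlu];
}

// Built-in types provided by each platform.
const nameInput: JovoInput = {
  name: "name",
  type: { alexa: "AMAZON.US_FIRST_NAME", dialogflow: "@sys.given-name" },
};

// A custom type referenced from the inputTypes array, shared by all services.
const cityInput: JovoInput = { name: "city", type: "myCityInputType" };

console.log(inputTypeFor(nameInput, "dialogflow")); // @sys.given-name
console.log(inputTypeFor(cityInput, "alexa")); // myCityInputType
```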

Input Types

The inputTypes array lists all the input types that are referenced as inputs inside intents.

Each input type contains a name and an array of values, which can optionally include synonyms.

Input Type Name

The name specifies how the input type is referenced. Again, we recommend using a consistent style throughout all input types to keep them organized.

Values

This is an array of elements that each contain a value and optionally synonyms. With the values, you can define which inputs you're expecting from the user.

Synonyms

Sometimes different words have the same meaning. For example, an input type for cities could have the main value New York and the synonym New York City.
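
The sketch below shows such an input type in TypeScript, plus a hypothetical resolveValue helper that maps what a user said back to the main value. The matching here (exact, case-insensitive) is a simplification for illustration; NLU services apply their own matching when they resolve synonyms.

```typescript
interface JovoInputTypeValue {
  value: string;
  synonyms?: string[];
}

interface JovoInputType {
  name: string;
  values: JovoInputTypeValue[];
}

// Illustrative custom input type: New York has one synonym, Berlin has none.
const myCityInputType: JovoInputType = {
  name: "myCityInputType",
  values: [
    { value: "New York", synonyms: ["New York City"] },
    { value: "Berlin" },
  ],
};

// Hypothetical helper: returns the main value whose value or synonyms
// match the spoken text (trimmed, case-insensitive), or undefined.
function resolveValue(inputType: JovoInputType, spoken: string): string | undefined {
  const needle = spoken.trim().toLowerCase();
  for (const entry of inputType.values) {
    const candidates = [entry.value].concat(entry.synonyms || []);
    if (candidates.some((candidate) => candidate.toLowerCase() === needle)) {
      return entry.value;
    }
  }
  return undefined;
}

console.log(resolveValue(myCityInputType, "new york city")); // New York
console.log(resolveValue(myCityInputType, "Paris")); // undefined
```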

To learn more about how these input values and synonyms can be accessed in your Jovo app, take a look at the Jovo Docs: Routing > Input.

Platform-specific Elements

Some intents or input types may only be needed for certain platforms. You can define them as additional elements inside a platform-specific object, for example alexa for Amazon Alexa.

The elements inside the alexa object follow the original structure of the Alexa Interaction Model. For example, phrases (Jovo Model naming) are called samples there.
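
A TypeScript sketch of a model that keeps Alexa built-in intents (such as AMAZON.StopIntent) in the alexa object, using the native Alexa Interaction Model nesting (interactionModel.languageModel.intents with samples). The JovoModelWithAlexa type and the alexaIntentNames helper are hypothetical and only illustrate how shared and platform-specific intents combine.

```typescript
// Minimal subset of the Alexa Interaction Model intent format.
interface AlexaIntent {
  name: string;
  samples: string[];
  slots?: { name: string; type: string }[];
}

interface JovoModelWithAlexa {
  invocation: string;
  intents: { name: string; phrases: string[] }[];
  // Platform-specific elements, written in the native Alexa structure.
  alexa?: {
    interactionModel: {
      languageModel: { intents: AlexaIntent[] };
    };
  };
}

const model: JovoModelWithAlexa = {
  invocation: "my test app",
  intents: [{ name: "HelloWorldIntent", phrases: ["hello", "say hi"] }],
  alexa: {
    interactionModel: {
      languageModel: {
        // Alexa built-in intents only exist on Alexa, so they live here.
        intents: [
          { name: "AMAZON.CancelIntent", samples: [] },
          { name: "AMAZON.HelpIntent", samples: [] },
          { name: "AMAZON.StopIntent", samples: [] },
        ],
      },
    },
  },
};

// Hypothetical helper: lists the intent names an Alexa build would contain,
// i.e. the shared intents followed by the Alexa-only ones.
function alexaIntentNames(m: JovoModelWithAlexa): string[] {
  const shared = m.intents.map((intent) => intent.name);
  const alexaOnly = m.alexa?.interactionModel.languageModel.intents.map((intent) => intent.name) ?? [];
  return shared.concat(alexaOnly);
}

console.log(alexaIntentNames(model).join(", "));
```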

For more information, see the respective platform docs.

Using the Jovo Model with the Jovo CLI

In regular Jovo projects, the Jovo Model is translated into different NLU formats and then deployed by using the Jovo CLI.

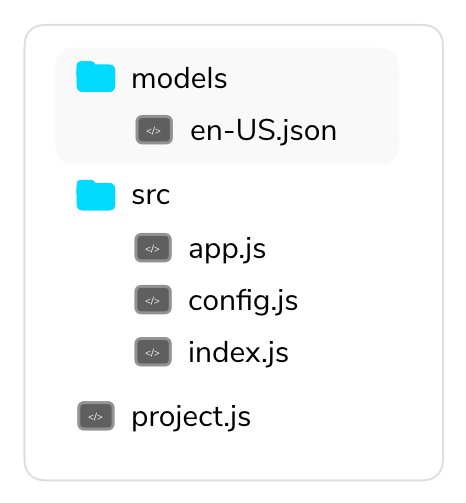

The workflow consists of three elements:

- models folder that stores the Jovo Model files

- platforms folder that contains all generated files

- project.js file that contains all project configuration

Models Folder

The models folder contains all the language models. Each locale (like en-US, de-DE) has its own JSON file.

Platforms Folder

The platforms folder includes all the information you need to deploy the project to the respective developer platforms like Amazon Alexa and Google Assistant.

At the beginning of a new project, the folder doesn't exist until you either import an existing platform project with jovo get, or create the files from the Jovo Model with jovo build.

We recommend using the project.js file (see next section) as the single source of truth and adding the platforms folder to the .gitignore to avoid conflicts (like Alexa Skill IDs) if you're working on a project with a team.

Project Configuration

The project.js file contains all the project-specific information that the Jovo CLI uses to generate the platforms folder with the build command.

Learn everything about Jovo project configuration in the project.js docs.

The file specifies which NLU service should be used for which platform.

If you want to add more configuration, you can turn the nlu element into an object, for example for Alexa.
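
As a sketch, here is the nlu element in both forms, written as a TypeScript object rather than the actual project.js. The platform keys alexaSkill and googleAction, the name property of the object form, and the nluName helper are assumptions for illustration; check the project.js docs for the exact schema.

```typescript
// The nlu element: a plain NLU name, or an object with a name plus extra options.
type NluConfig = string | { name: string; [option: string]: unknown };

// Simplified config: only platform entries with an nlu element.
interface ProjectConfig {
  [platform: string]: { nlu: NluConfig };
}

// Assumed project.js contents: object form for Alexa, string form for Google.
const project: ProjectConfig = {
  alexaSkill: { nlu: { name: "alexa" } },
  googleAction: { nlu: "dialogflow" },
};

// Hypothetical helper: returns the NLU name regardless of which form is used.
function nluName(config: ProjectConfig, platform: string): string | undefined {
  const nlu = config[platform]?.nlu;
  if (nlu === undefined) return undefined;
  return typeof nlu === "string" ? nlu : nlu.name;
}

console.log(nluName(project, "alexaSkill")); // alexa
console.log(nluName(project, "googleAction")); // dialogflow
```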

You can also add more transformations. For example, if you have a default en.json file in your models folder and want it to be duplicated into en-US and en-CA interaction models for Alexa, you can configure this mapping in the project.js file.
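
The following sketch assumes the mapping is stored as a lang object inside the nlu element (model file name to list of target locales). Both that shape and the targetLocales helper are assumptions; the helper only illustrates the resulting expansion and is not part of the Jovo CLI.

```typescript
// Assumed shape of an nlu object with a lang mapping:
// model file name -> locales that should be generated from it.
interface NluWithLang {
  name: string;
  lang?: Record<string, string[]>;
}

// Hypothetical helper: for each model file (e.g. "en"), lists the locales
// the build would produce. Files without a mapping keep their own name.
function targetLocales(modelFiles: string[], nlu: NluWithLang): Record<string, string[]> {
  const result: Record<string, string[]> = {};
  for (const file of modelFiles) {
    result[file] = nlu.lang?.[file] ?? [file];
  }
  return result;
}

const alexaNlu: NluWithLang = {
  name: "alexa",
  lang: { en: ["en-US", "en-CA"] },
};

const locales = targetLocales(["en", "de-DE"], alexaNlu);
console.log(locales.en.join(", ")); // en-US, en-CA
console.log(locales["de-DE"].join(", ")); // de-DE
```

With this mapping, en.json is expanded into en-US and en-CA, while a file that has no entry, such as de-DE.json, is used as is.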

Using the Jovo Model npm Packages

You can install the package with npm.

Model Conversions

By using this package in your code, you can convert the data from one platform schema to another.

Jovo Model to Platform Schema

You can use the package to turn a Jovo Model into a platform model like Alexa.
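
The jovo-model packages perform this conversion for you. The following is not their API but a simplified TypeScript sketch of the kind of mapping involved, using native Alexa field names (invocationName, samples, slots, types) and assuming every input has a single string type. The toAlexa function and the example model are hypothetical.

```typescript
// Simplified Jovo Model types (only the fields used here).
interface JovoModel {
  invocation: string;
  intents: { name: string; phrases: string[]; inputs?: { name: string; type: string }[] }[];
  inputTypes?: { name: string; values: { value: string; synonyms?: string[] }[] }[];
}

// Subset of the Alexa Interaction Model schema.
interface AlexaModel {
  interactionModel: {
    languageModel: {
      invocationName: string;
      intents: { name: string; samples: string[]; slots?: { name: string; type: string }[] }[];
      types?: { name: string; values: { name: { value: string; synonyms?: string[] } }[] }[];
    };
  };
}

// Illustrative mapping: phrases become samples, inputs become slots,
// and input types become custom slot types.
function toAlexa(model: JovoModel): AlexaModel {
  return {
    interactionModel: {
      languageModel: {
        invocationName: model.invocation,
        intents: model.intents.map((intent) => ({
          name: intent.name,
          samples: intent.phrases,
          slots: (intent.inputs || []).map(({ name, type }) => ({ name, type })),
        })),
        types: (model.inputTypes || []).map((inputType) => ({
          name: inputType.name,
          values: inputType.values.map(({ value, synonyms }) => ({ name: { value, synonyms } })),
        })),
      },
    },
  };
}

const alexa = toAlexa({
  invocation: "my test app",
  intents: [{ name: "LivingCityIntent", phrases: ["I live in {city}"], inputs: [{ name: "city", type: "myCityInputType" }] }],
  inputTypes: [{ name: "myCityInputType", values: [{ value: "New York", synonyms: ["New York City"] }] }],
});

console.log(JSON.stringify(alexa, null, 2));
```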

Platform Schema to Jovo Model

You can also turn a platform model like Alexa into the Jovo Model format.
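
The reverse direction can be sketched the same way. As before, fromAlexa is a hypothetical, simplified illustration rather than the package's API, and it covers only the Alexa fields shown.

```typescript
// Subset of the Alexa Interaction Model schema.
interface AlexaModel {
  interactionModel: {
    languageModel: {
      invocationName: string;
      intents: { name: string; samples: string[]; slots?: { name: string; type: string }[] }[];
      types?: { name: string; values: { name: { value: string; synonyms?: string[] } }[] }[];
    };
  };
}

// Simplified Jovo Model types (only the fields used here).
interface JovoModel {
  invocation: string;
  intents: { name: string; phrases: string[]; inputs?: { name: string; type: string }[] }[];
  inputTypes?: { name: string; values: { value: string; synonyms?: string[] }[] }[];
}

// Illustrative reverse mapping: samples become phrases, slots become inputs,
// and custom slot types become input types.
function fromAlexa(alexa: AlexaModel): JovoModel {
  const languageModel = alexa.interactionModel.languageModel;
  return {
    invocation: languageModel.invocationName,
    intents: languageModel.intents.map((intent) => ({
      name: intent.name,
      phrases: intent.samples,
      inputs: (intent.slots || []).map(({ name, type }) => ({ name, type })),
    })),
    inputTypes: (languageModel.types || []).map((slotType) => ({
      name: slotType.name,
      values: slotType.values.map(({ name }) => ({ value: name.value, synonyms: name.synonyms })),
    })),
  };
}

const jovo = fromAlexa({
  interactionModel: {
    languageModel: {
      invocationName: "my test app",
      intents: [
        { name: "LivingCityIntent", samples: ["I live in {city}"], slots: [{ name: "city", type: "myCityInputType" }] },
        { name: "AMAZON.StopIntent", samples: [] },
      ],
      types: [{ name: "myCityInputType", values: [{ name: { value: "New York", synonyms: ["New York City"] } }] }],
    },
  },
});

console.log(jovo.intents.map((intent) => intent.name).join(", ")); // LivingCityIntent, AMAZON.StopIntent
```

This sketch treats Alexa built-in intents such as AMAZON.StopIntent like any other intent; in a Jovo Model they would arguably belong in the platform-specific alexa element described earlier.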

Platform Schema A to Platform Schema B

You can also use the package to turn one platform schema into another, e.g. Alexa into Dialogflow.
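
Because the Jovo Model sits between all platforms, converting schema A to schema B can be done by going through it: A to Jovo Model, then Jovo Model to B. The TypeScript sketch below shows that composition with two toy schemas; SchemaA, SchemaB, the Converter interface, and its toJovo/fromJovo methods are hypothetical and are not the method names of the jovo-model core package.

```typescript
// Minimal Jovo Model used as the shared intermediate format.
interface JovoModel {
  invocation: string;
  intents: { name: string; phrases: string[] }[];
}

// Hypothetical converter pairing both directions for one native schema.
interface Converter<Native> {
  toJovo(native: Native): JovoModel;
  fromJovo(model: JovoModel): Native;
}

// Schema A to schema B always passes through the Jovo Model.
function convert<A, B>(source: A, from: Converter<A>, to: Converter<B>): B {
  return to.fromJovo(from.toJovo(source));
}

// Two toy schemas standing in for real platform formats.
interface SchemaA {
  invocationName: string;
  intents: { name: string; samples: string[] }[];
}
interface SchemaB {
  appName: string;
  intents: { intentName: string; trainingPhrases: string[] }[];
}

const converterA: Converter<SchemaA> = {
  toJovo: (a) => ({
    invocation: a.invocationName,
    intents: a.intents.map((i) => ({ name: i.name, phrases: i.samples })),
  }),
  fromJovo: (m) => ({
    invocationName: m.invocation,
    intents: m.intents.map((i) => ({ name: i.name, samples: i.phrases })),
  }),
};

const converterB: Converter<SchemaB> = {
  toJovo: (b) => ({
    invocation: b.appName,
    intents: b.intents.map((i) => ({ name: i.intentName, phrases: i.trainingPhrases })),
  }),
  fromJovo: (m) => ({
    appName: m.invocation,
    intents: m.intents.map((i) => ({ intentName: i.name, trainingPhrases: i.phrases })),
  }),
};

const converted = convert<SchemaA, SchemaB>(
  { invocationName: "my test app", intents: [{ name: "HelloWorldIntent", samples: ["hello"] }] },
  converterA,
  converterB,
);

console.log(converted.intents[0].trainingPhrases.join(", ")); // hello
```

With this design, supporting N platforms needs one converter per platform instead of one per pair of platforms.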

Updating the Model

The Jovo Model also allows you to extract, manipulate, and delete data.
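
To illustrate these three operations, here is a TypeScript sketch that works directly on plain model data. The helpers getIntent, addPhrase, and removeIntent are hypothetical and are not the jovo-model API; they return new objects instead of mutating the input so the original model stays intact.

```typescript
interface JovoIntent {
  name: string;
  phrases: string[];
}

interface JovoModel {
  invocation: string;
  intents: JovoIntent[];
}

// Extract: find an intent by name.
function getIntent(model: JovoModel, name: string): JovoIntent | undefined {
  return model.intents.find((intent) => intent.name === name);
}

// Manipulate: add a phrase to an intent unless it is already present.
function addPhrase(model: JovoModel, intentName: string, phrase: string): JovoModel {
  return {
    ...model,
    intents: model.intents.map((intent) =>
      intent.name === intentName && intent.phrases.indexOf(phrase) === -1
        ? { ...intent, phrases: intent.phrases.concat(phrase) }
        : intent,
    ),
  };
}

// Delete: remove an intent by name.
function removeIntent(model: JovoModel, name: string): JovoModel {
  return { ...model, intents: model.intents.filter((intent) => intent.name !== name) };
}

const original: JovoModel = {
  invocation: "my test app",
  intents: [
    { name: "HelloWorldIntent", phrases: ["hello", "say hi"] },
    { name: "MyNameIsIntent", phrases: ["my name is {name}"] },
  ],
};

const updated = removeIntent(addPhrase(original, "HelloWorldIntent", "good morning"), "MyNameIsIntent");

console.log(getIntent(updated, "HelloWorldIntent")?.phrases.join(", ")); // hello, say hi, good morning
console.log(original.intents.length); // 2
```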

Contributing

Feel free to add more NLU providers via pull requests. Each platform implements a set of methods defined by the jovo-model core package.